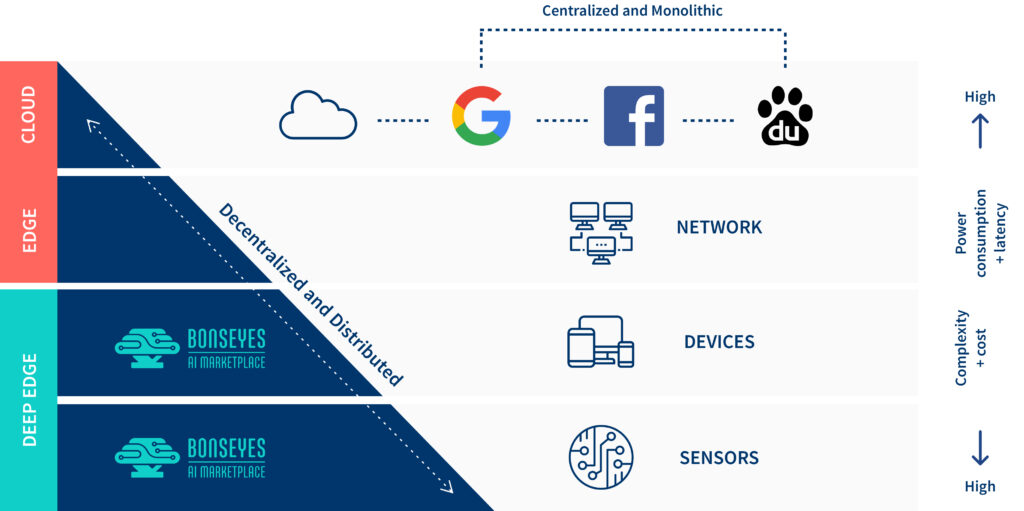

Roles of the different actors in the AI value chain

A fully automated AI procurement process

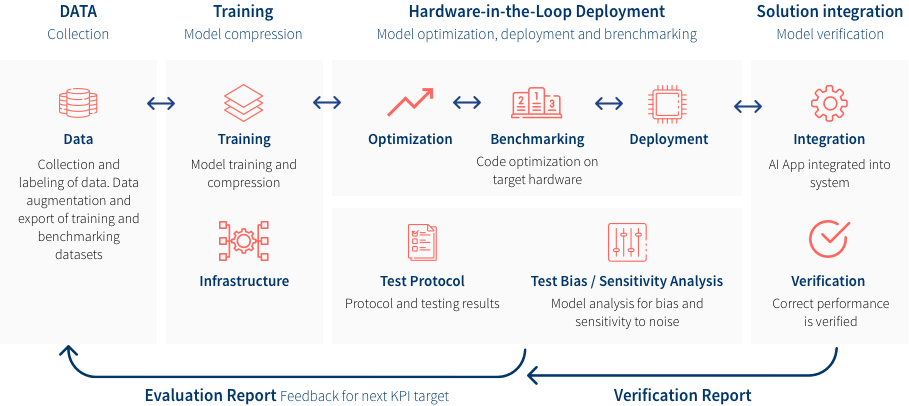

AI-App pipeline: an integrating framework that brings data, algorithms, and deployment tools together.

The BonsAPPs MOOC training courses are now available online for free!

The BonsAPPs services will be accessible on the Bonseyes Marketplace and interoperable with the AI4EU community, so that they can respond to AI Challenges that fit end users' needs.

We propose an end-to-end AI pipeline for developing and deploying deep neural network solutions on embedded devices. The pipeline architecture is modular and is organized around four main tasks.

Reduction in development time compared to monolithic system design methods

Reduction in the cost of ownership for training deep learning models, compared to current training approaches designed for the cloud

Re-usability of AI assets/apps on the marketplace

Reduction in computational and memory requirements compared to existing solutions for deep learning models on embedded systems
